Autonomous Revenue Architecture

How stateful machines replace manual decision-making, decoupling revenue from OpEx entirely.

Every organization is built on a hidden assumption: that human judgment and availability are the constraining resources. Hire people. Grow revenue. Hire more people. Grow more revenue. It was the only playbook that worked for decades. Campaigns multiplied. Channels proliferated. Surface area expanded. All downstream from budget and headcount. Investors funded it because the math was legible: headcount scaled linearly, revenue scaled super-linearly, margins could be cleanly measured. It dominated. But suddenly it is no longer viable. Not because it stopped working, but because something faster has replaced the physics underneath it.

While many debate the merits of replacing human jobs with AI, competing in the rapidly evolving 2026 business landscape requires a new approach to organizational design.

The machines now make business decisions faster than humans can react. Campaign planning has compressed from months to minutes. A/B tests went from weeks to minutes. The observe-orient-decide-act (OODA) loop once spanned quarters. Now it spans hours. Competitors who automated their decision layer are running fifty iterations before the creative meeting starts. They are testing offers your team has not conceived yet. They compound their advantage because they skip the human interrupt entirely.

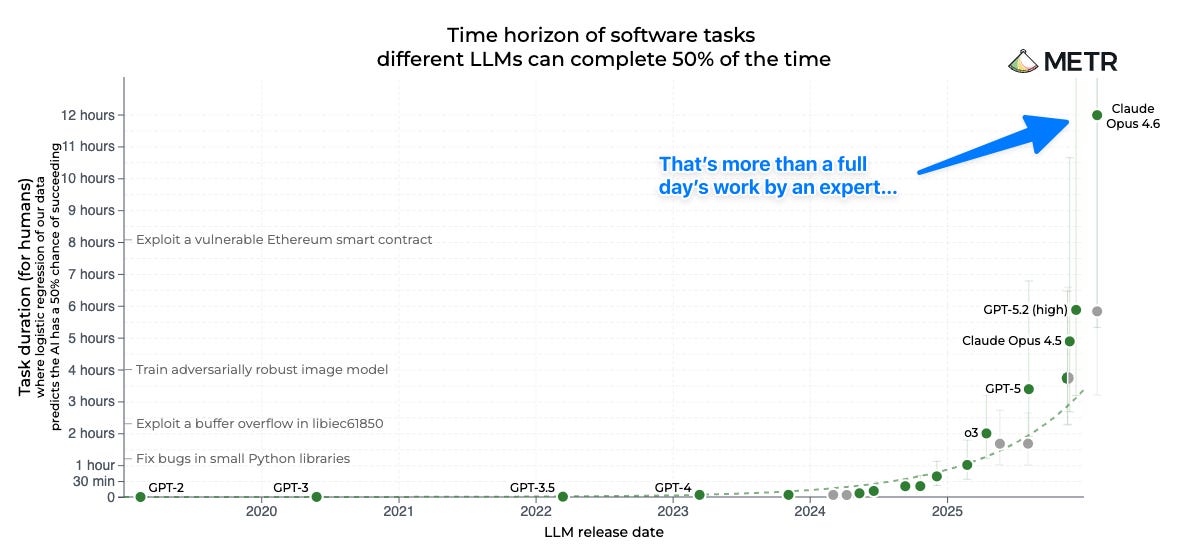

This is not theoretical. The technology debate is over. We are talking tactics now. Models like Opus 4.6, scoring this high on the METR benchmark and running in a proper enterprise harness, have irreversibly changed how revenue organizations scale.

Better models are not the bottleneck. They work now, if the data infrastructure is ready to receive them. That readiness is the gap. A few well-crafted Claude Code sessions can stand up automated decisioning systems that compound daily, and the lead those systems build does not close. Any leader who has not personally automated a decision-making function in the last month is making decisions with a stale map.

The constraint is no longer capital. It is no longer raw talent. It is implementation speed. In 2026, what separates companies will not be who has the biggest GTM team. It will be who figured out how to decouple revenue from headcount entirely. Who built autonomous systems that observe market conditions, orient to customer signals, and execute bidding and routing decisions without waiting for human judgment to catch up.

A headcount-first GTM strategy is not “inefficient”. It is not “slow”. It is an existential liability.

The companies that figure this out will not just grow faster. They will grow cheaper. Decoupling revenue from headcount is not optimization. It’s structural. The Burn Multiple compresses. The Rule of 40 stops being a ceiling and starts being a floor. That is the prize.
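For readers less familiar with the two metrics: the Burn Multiple is commonly defined as net cash burn divided by net new ARR, and the Rule of 40 as revenue growth rate plus profit margin, both in percentage points. The minimal Python sketch below computes both for two hypothetical companies. Every figure is invented purely to show the arithmetic, and which margin to use (free cash flow or EBITDA) varies by convention.

```python
from dataclasses import dataclass


@dataclass
class Period:
    """One hypothetical fiscal year for a SaaS company."""
    net_burn: float            # cash consumed in the period, in dollars
    net_new_arr: float         # ARR added minus ARR churned, in dollars
    revenue_growth_pct: float  # year-over-year revenue growth, in percent
    profit_margin_pct: float   # e.g. free-cash-flow margin, in percent (negative when burning)


def burn_multiple(p: Period) -> float:
    """Net burn per dollar of net new ARR; lower means more capital-efficient growth."""
    return p.net_burn / p.net_new_arr


def rule_of_40(p: Period) -> float:
    """Growth rate plus profit margin, in percentage points; 40 or more is the usual bar."""
    return p.revenue_growth_pct + p.profit_margin_pct


# Invented figures, chosen only to illustrate the arithmetic.
headcount_led = Period(net_burn=12_000_000, net_new_arr=8_000_000,
                       revenue_growth_pct=40, profit_margin_pct=-25)
system_led = Period(net_burn=3_000_000, net_new_arr=10_000_000,
                    revenue_growth_pct=50, profit_margin_pct=-5)

for name, p in [("headcount-led", headcount_led), ("system-led", system_led)]:
    print(f"{name}: burn multiple {burn_multiple(p):.2f}x, Rule of 40 score {rule_of_40(p):.0f}")
```

In this toy comparison the system-led company burns less per dollar of new ARR and clears the Rule of 40 bar, while the headcount-led one does not.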

The company structures and leaders that got us here are not equipped to build autonomous revenue systems. The reason is not talent. It’s incentive. The organizational structure is optimized to do the opposite of what needs to happen.

Marketers are rewarded for managing teams, negotiating vendor contracts, and shipping quarterly initiatives. They are not rewarded for building invisible infrastructure. Measurement systems do not look like wins. Data architecture does not ship to customer-facing channels. So it gets pushed back indefinitely. Meanwhile, vendors show up with tools that promise “AI-powered marketing” and get funded because they are visible, shippable, and create the impression of modernization.

Ground truth about profitable customers is distributed across systems that were never designed to agree. It sits in the CRM, the billing system, the ad platforms, and three analytics tools that contradict each other. Nobody stitches it together. Integration work is invisible. Invisible work dies. So GTM teams are making seven-figure decisions with 30% visibility. Seventy percent of signup attribution traces to untracked channels. And that is exactly why the headcount model persists: when nobody can see, companies need people to guess.
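To make “stitching” concrete, here is a minimal Python sketch, assuming each system's export can already be joined on a shared `account_id` (in practice, establishing that key is most of the work). The records, channel names, and the `stitch` helper are all hypothetical. The point is the explicit `untracked` bucket: visibility is simply the share of revenue that can be tied to any known channel at all.

```python
from collections import defaultdict

# Hypothetical exports from three systems, already keyed by a shared account_id.
crm_channel = {"a1": "paid_search", "a2": None, "a3": "referral", "a4": None}
billing_revenue = {"a1": 1200.0, "a2": 800.0, "a3": 1000.0, "a4": 1000.0}
ad_platform_last_click = {"a2": "paid_social"}  # only where the tracking pixel fired


def stitch(crm, billing, ad_clicks):
    """Total billed revenue per acquisition channel.

    Prefers the CRM's channel, falls back to the ad platform's last click,
    and puts everything else in an explicit 'untracked' bucket.
    """
    revenue_by_channel = defaultdict(float)
    for account_id, revenue in billing.items():
        channel = crm.get(account_id) or ad_clicks.get(account_id) or "untracked"
        revenue_by_channel[channel] += revenue
    return dict(revenue_by_channel)


by_channel = stitch(crm_channel, billing_revenue, ad_platform_last_click)
total = sum(by_channel.values())
visibility = 1 - by_channel.get("untracked", 0.0) / total
print(by_channel)
print(f"Revenue tied to a known channel: {visibility:.0%}")
```

Billing is treated as the source of truth for revenue here; which system wins when sources disagree is a design choice each team has to make explicitly.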

This is not a technology problem. It is a prioritization problem. And it is structural. And the champion for it will not come from inside the existing departments.

Mastery of the old game actively prevents seeing the new one. The marketer’s entire career was built on managing headcount and channels. Architecting systems was always someone else’s job. Almost overnight, the majority of marketing spend has become a systems architecture problem. An AI decisioning system cannot optimize a metric that nobody trusts and nobody computes in real time.

The inversion is simple: automation thrives on measurement and feedback loops. Most companies will spend 2026 buying AI copywriters, ad designers, and email optimizers: UI layers painted over plumbing full of skeletons. They will celebrate 15% productivity gains and assume they are keeping pace. They are not. The gap is not closing. It is widening. The companies that do the hard work of connecting the data layer will discover something entirely different: modern AI, given a clear optimization target and a harness to work from, does not improve the old game. It dissolves it entirely. Those companies will operate at a fundamentally different velocity.

Here is what that velocity looks like. Two companies. Same market. Same keywords. Titan runs paid acquisition the standard way. Smart team. Weekly optimization. On Tuesday they spot a high-converting keyword cluster. They adjust bids, reallocate budget, push new creatives. Changes go live Thursday.

By Thursday, the battle was already over.

Phantom has a stateful revenue machine. It spotted those keywords six days ago. Tested them. Found the adjacent long-tails, the misspellings, the question variants, the comparison terms that convert at half the cost. Positions Titan cannot see from its tooling. By the time Titan adjusts on Thursday, Phantom has already harvested the cheapest inventory, driven up the auction price on the terms Titan just found, and moved to the next cluster. Titan bids up. Phantom is gone. Titan finds another pocket. Phantom was there first. Every time Titan reacts, the machine has already priced it out and relocated to cheaper ground. Titan is not behind by a few days. It is structurally more expensive on every keyword, every impression, every conversion. Worse, Titan is blind to it. Its CAC is permanently higher. Titan’s data was accurate. Its decisions were rational. It never saw the gap because Phantom got inside its OODA loop. That gap compounds daily.
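Phantom's first move, finding variants that convert at half the cost, can be sketched as a simple filter over per-keyword test results. This is a deliberately naive, deterministic rule chosen for illustration, not a description of any specific system; a production machine would more likely use a statistical test or a bandit method such as Thompson sampling to decide when a variant has enough data. The keyword names, figures, and thresholds (`max_ratio`, `min_conversions`) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class KeywordStats:
    keyword: str
    spend: float      # dollars spent during the test window
    conversions: int  # conversions attributed in the same window

    @property
    def cpa(self) -> float:
        """Cost per acquisition; infinite when nothing has converted yet."""
        return self.spend / self.conversions if self.conversions else float("inf")


def cheaper_variants(head, variants, max_ratio=0.5, min_conversions=5):
    """Variants whose CPA is at most max_ratio times the head term's CPA.

    Variants with fewer than min_conversions are skipped as too noisy to trust.
    Results are sorted cheapest first, i.e. where budget should move next.
    """
    threshold = head.cpa * max_ratio
    keep = [v for v in variants
            if v.conversions >= min_conversions and v.cpa <= threshold]
    return sorted(keep, key=lambda v: v.cpa)


# Hypothetical test results for a head term and some adjacent variants.
head = KeywordStats("crm software", spend=5000, conversions=50)  # CPA $100
variants = [
    KeywordStats("crm software for startups", spend=600, conversions=12),  # long-tail, CPA $50
    KeywordStats("crm sofware", spend=90, conversions=3),                 # misspelling, too few conversions
    KeywordStats("what is a crm", spend=800, conversions=8),              # question, CPA $100
    KeywordStats("crm vs spreadsheet", spend=400, conversions=10),        # comparison, CPA $40
]

for v in cheaper_variants(head, variants):
    print(f"{v.keyword}: ${v.cpa:.0f} per conversion")
```

Run continuously rather than weekly, a loop like this surfaces the cheap pockets before a human-paced team has finished its Tuesday review.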

This is not science fiction. It is operational reality today. The difference is not the model. It is what surrounds it.

Most companies chasing AI in 2026 are stacking elaborate instructions, chaining prompts, fighting the model into rigid behavior. This is a dead end. Prompts break on every model upgrade. They have no memory. They cannot learn or grow. The shift is from prompt engineering to stateful machine engineering.

The principle is counterintuitive: stop telling the intelligence what not to do. Build a bounded environment and unleash it. The more clever the box, the more powerful the intelligence becomes. The box holds. The intelligence compounds. The system learns. That architecture has a specific shape, and it applies everywhere a human currently stares at a dashboard (the “state”) and makes a judgment call.
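A minimal Python sketch of “bounded environment plus persistent state” for a single decision, a keyword bid, might look like the following. It is not the four-component design the next piece describes; every name and number here (`Guardrails`, `BidState`, the 20% step limit, the smoothing factor, the target return on ad spend) is a hypothetical assumption. The policy is free to propose any bid, the guardrails decide what actually ships, and the state carries what was learned into the next cycle.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Guardrails:
    """The box: hypothetical hard limits the policy cannot cross."""
    min_bid: float = 0.50
    max_bid: float = 8.00
    max_step_change: float = 0.20  # a bid may move at most +/-20% per cycle


@dataclass
class BidState:
    """What the machine has learned so far; persists across decision cycles."""
    value_per_click: Dict[str, float] = field(default_factory=dict)
    bids: Dict[str, float] = field(default_factory=dict)


def observe(state: BidState, keyword: str, observed: float, alpha: float = 0.3) -> None:
    """Fold a new value-per-click observation into the running estimate (exponential smoothing)."""
    prior = state.value_per_click.get(keyword, observed)
    state.value_per_click[keyword] = (1 - alpha) * prior + alpha * observed


def propose_bid(state: BidState, keyword: str, target_roas: float) -> float:
    """Unconstrained policy: bid so estimated value per click / cost per click hits the target."""
    return state.value_per_click[keyword] / target_roas


def enforce(proposed: float, current: Optional[float], rails: Guardrails) -> float:
    """Clamp the proposal to the per-cycle step limit, then to the absolute bid range."""
    if current is not None:
        low = current * (1 - rails.max_step_change)
        high = current * (1 + rails.max_step_change)
        proposed = min(max(proposed, low), high)
    return min(max(proposed, rails.min_bid), rails.max_bid)


def step(state: BidState, rails: Guardrails, keyword: str, observed: float,
         target_roas: float = 2.0) -> float:
    """One observe, decide, act cycle for a single keyword."""
    observe(state, keyword, observed)
    bid = enforce(propose_bid(state, keyword, target_roas), state.bids.get(keyword), rails)
    state.bids[keyword] = bid
    return bid


state, rails = BidState(), Guardrails()
history: List[float] = [step(state, rails, "crm software", v) for v in (4.0, 10.0, 10.0, 30.0)]
print([round(b, 2) for b in history])
```

Because each cycle can move a bid by at most 20%, a sudden spike in observed value raises the bid gradually rather than all at once. Loosening or tightening the box is a one-line change to `Guardrails`, while the learning in `BidState` carries over untouched.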

The stateful machine has four components. The next piece goes deep on all of them.

In 12 to 18 months, these architectures will be a feature inside every major ad platform. Not a competitive advantage. Not a moat. A checkbox. But the companies that upgrade their architecture internally today will keep a strong advantage, thanks to everything their state machines will have learned by then.

The speed of movement becomes the moat. Early movers acquire customers at 30-40% lower CAC. They bid higher, but only on valuable inventory. They hit 6-month payback while competitors still report 12. They have eaten their competitors’ margins, and current best practices won’t even detect it. The differential becomes permanent.
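The payback arithmetic is worth making explicit. CAC payback is commonly computed as CAC divided by monthly gross profit per customer. With hypothetical baseline numbers that produce a 12-month payback, a 40% cut in CAC alone gets to about 7 months; reaching 6 also requires higher-value customers, which is what bidding only on valuable inventory is meant to deliver. The figures below are illustrative, not measured.

```python
def cac_payback_months(cac: float, monthly_revenue: float, gross_margin: float) -> float:
    """Months of per-customer gross profit needed to recover the cost of acquiring that customer."""
    return cac / (monthly_revenue * gross_margin)


# Hypothetical baseline: $1,200 CAC, $125 monthly revenue per customer, 80% gross margin.
baseline = cac_payback_months(cac=1200, monthly_revenue=125, gross_margin=0.80)
# 40% lower CAC, same customers.
cheaper = cac_payback_months(cac=1200 * 0.60, monthly_revenue=125, gross_margin=0.80)
# 40% lower CAC and customers worth 20% more per month (bidding only on valuable inventory).
cheaper_and_better = cac_payback_months(cac=1200 * 0.60, monthly_revenue=150, gross_margin=0.80)

for label, months in [("baseline", baseline), ("40% lower CAC", cheaper),
                      ("lower CAC + higher-value customers", cheaper_and_better)]:
    print(f"{label}: {months:.1f} months")
```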

The revenue organization of tomorrow is small. One architect, workers managing guardrails, and an exception handler. Not thirty. Not a department. A tight system.

Given sufficiently advanced intelligence, the future belongs to measurement architects and target function designers. The ones with the most taste win.

The default heuristic is that human judgment is necessary. The companies that disprove that first are not getting a head start. They are filling the only seats left when the music stops.

I am writing the next piece now. If there is a specific part of this architecture you want me to go deeper on, let me know.